Using AI for coding

The current discussion around AI is a divisive one. Some people think we shouldn't touch it at all, and some dive head-first into using it for everything. I'm going to speak about one small part of this, the part that impacts my life: how good Claude Opus 4.5, by Anthropic, is at coding. It has legitimately become a tool that I use regularly in my day-to-day work.

How did we get here?

Just a few short years ago, few people expected AI to spread into everything the way it has, especially in programming, where it seems to have had the biggest impact.

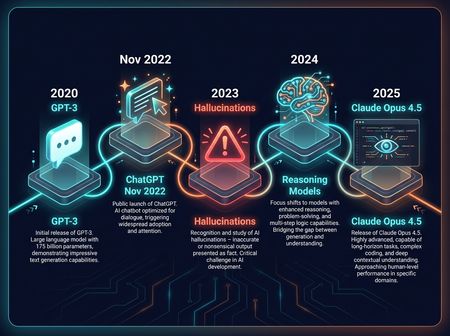

The original ChatGPT, released on November 30, 2022, was an impressive piece of technology. It was amazing to watch this text box give reasonable answers to questions and write little stories. It also hallucinated things that simply were not real. If you know how these things work, this makes sense.

These hallucinations kept the original ChatGPT from being useful for important tasks, but it was still a fun toy to play with. As new versions were released, they were trained on larger and larger data sets. It was all about trying to make the models denser and "smarter." This changed when someone came up with reasoning models.

That brings us to where we are today. Every company is competing on how much it can stuff into the LLM, how many parameters it has, and the internal feedback and reasoning methods that keep all of that in check. This has made the new models very capable and much more trustworthy in their output, which brings us to the point of this post: Claude Opus 4.5. With Claude Opus 4.5, we've gone from AI being a tool that makes some tasks easier to AI being actually good at writing code. But first, it helps to understand a few things.

How do they work?

Before we get into how useful LLMs are, we should cover how they work. There are a lot of misconceptions about what our current AI is, what it's capable of, and, more importantly, what it is not. Our current AI is not smart. It has no understanding of what we are talking about. What it produces has no meaning to the AI. It's just good at making us think it does. This will make more sense in a minute.

LLMs are an extension of something we've been doing for decades: machine learning. Given some set of inputs, predict the output. Given specific health markers, predict the medical outcome. Given a set of images from a camera, predict whether an object is aligned correctly and adjust if it is not. You can see how useful these tasks are: predicting cancer in a patient, or making sure a car part is aligned correctly on a workspace so a robot can do its job. LLMs are an extension of this, with a vastly increased set of inputs.
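To make "given some inputs, predict the output" a bit more concrete, here is a minimal sketch in Python of the part-alignment idea. Everything in it is an assumption for illustration: the measurements are invented, the names `TRAINING` and `predict` are hypothetical, and the technique (picking the label of the closest known example, often called 1-nearest-neighbor) is just one of the simplest ways to do this, not how any real factory system or LLM works.

```python
import math

# Invented example data: (x_offset_mm, y_offset_mm) measured by a camera,
# paired with the label a human assigned to that measurement.
TRAINING = [
    ((0.1, 0.2), "aligned"),
    ((0.3, 0.1), "aligned"),
    ((4.0, 3.5), "misaligned"),
    ((5.2, 4.8), "misaligned"),
]


def predict(features, training=TRAINING):
    """Predict a label by copying the label of the closest training example."""
    _, label = min(training, key=lambda example: math.dist(example[0], features))
    return label


print(predict((0.2, 0.2)))  # a small offset, close to the "aligned" examples
print(predict((4.5, 4.0)))  # a large offset, close to the "misaligned" examples
```

The model has no idea what a car part is. It only compares numbers against numbers it has seen before, which is the same basic deal with LLMs, just at a vastly larger scale.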

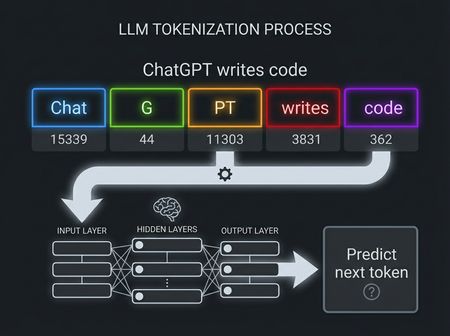

The inputs are broken down into numbers, called tokens. If you've ever wondered what tokens are, now you know. Each one is a number that represents some part of language. Without going into too many details, a token is a subword component: sometimes a whole word, sometimes just part of a word. The tokenizer page at https://platform.openai.com/tokenizer shows a little more about how text is broken down. Once the text is converted to numbers, you feed all of that input into the LLM and it predicts the next token. That's it: one token. To get the token after that, the entire message, including the token it just produced, has to be fed back into the LLM. That's a lot of processing for just one token. So keep that in mind: if ChatGPT produces 100 words (~130 tokens), it runs this loop 130 times.
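The loop described above can be sketched in a few lines of Python. This is a toy under loudly stated assumptions, not how a real LLM is built: the "tokenizer" here splits on whitespace instead of using subwords, the "model" is a simple count of which token followed which in the training text, and it only looks at the last token rather than the whole message. The names `tokenize`, `train_bigram`, `predict_next`, and `generate` are all hypothetical. What it does show faithfully is the shape of the loop: one call to the model produces one token, and that token is appended to the input before the next call.

```python
from collections import Counter, defaultdict


def tokenize(text, vocab):
    """Toy tokenizer: map each whitespace-separated word to an integer ID."""
    ids = []
    for word in text.split():
        if word not in vocab:
            vocab[word] = len(vocab)
        ids.append(vocab[word])
    return ids


def train_bigram(ids):
    """Count which token follows which in the training data."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(ids, ids[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, context):
    """Predict exactly one token. This toy model only uses the last token."""
    last = context[-1]
    if last not in counts:
        return None
    return counts[last].most_common(1)[0][0]


def generate(counts, prompt_ids, max_new_tokens):
    """Run the loop: predict one token, append it, feed everything back in."""
    context = list(prompt_ids)
    model_calls = 0
    for _ in range(max_new_tokens):
        next_id = predict_next(counts, context)
        model_calls += 1
        if next_id is None:
            break
        context.append(next_id)
    return context, model_calls


vocab = {}
training_ids = tokenize("the cat sat on the cat", vocab)
counts = train_bigram(training_ids)

output_ids, model_calls = generate(counts, tokenize("the", vocab), max_new_tokens=5)
id_to_word = {token_id: word for word, token_id in vocab.items()}
print(" ".join(id_to_word[i] for i in output_ids))  # the cat sat on the cat
print(model_calls)  # 5, one call per generated token
```

Five new tokens took five calls to the model. Swap the counting table for a network with billions of parameters and you can see where the processing cost comes from.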

Reasoning adds to that overhead by having the LLM produce an internal chain of thought, extra reasoning steps that help it make a better prediction. All of this is far more complicated in practice, but that's essentially how it works. I'm obviously leaving out a lot here, and there are plenty of research papers out there if you want to learn more.

What that means for coding

Since all LLMs do is predict the next token, and programming follows some sort of logic, it's not hard to imagine one producing code. Ask it for a function written in C++ and there are only so many ways to put that together. Train one of these models on descriptions of code and the code itself, and you get something that can start writing pretty coherent code. Up until recently, AI was mostly good for autocomplete-style tasks, like figuring out the rest of a line you started typing or refactoring to use different variable names. That really changed with Claude Opus 4.5.

Claude Opus 4.5 had two major improvements over previous coding models: its reasoning token usage is much more efficient, and it seems to really understand code. That means it can work on code for longer, with fewer tokens, and produce a much more accurate prediction of what code would give the requested output. That makes it far more useful while coding. You can ask it to do much more complicated tasks and it will produce something that is likely to work. Need a new feature added to your app? Just ask Opus to do it. It'll surprise you with how close it gets to doing it correctly.

What does this mean in practice? It means I will be leaning on AI more when I'm coding in the future. I still think it's important to understand the fundamentals. Being able to read and understand the output from the models is still vitally important. I would never publish something I can't properly review myself. However, it can vastly increase how much you produce. It can also help newcomers get caught up on complex code bases.

I know there is some hesitancy around using AI these days, but we need to find the correct way to use the tools given to us. I don't think we should be replacing devs with AI. But what devs do during the day? That's something that will change. Claude Opus 4.5 will not replace developers. However, I will be adding it to my toolkit.